I’ve been blogging a lot about documentation lately; I truly believe automated documentation is better than having people enter data manually. My company uses IT-Glue as a documentation system. IT-Glue is a very cool system but has some big API limitations. For example, you’re allowed to make 10 requests per second and 10,000 requests per day. These limits can get pretty painful if you manage a lot of workstations or servers that upload data at the same time.

After my previous blogs, the comment I received most was a worry about the API key. If the key gets stolen, you’re giving away the keys to the castle. An API key has no access restrictions, so with a leaked key all your documentation could be downloaded. I’ve been discussing this issue with IT-Glue for some time but haven’t gotten a real solution yet. This forced me to look for a solution myself, so I set some requirements for it.

- The solution needed to be simple and accessible for everyone.

- The solution needed to have multiple levels of authentication; an API key, IP whitelisting, and organization whitelisting.

- The solution needed to block requests for all passwords/files/etc for all organisations.

- The solution needed to allow some form of handling of the API rate limiting, e.g. repeating a request if it was rate limited.

- The solution needed to be usable without adapting any scripts (except URLs and API keys).

So after some research I decided to use an Azure Function for this. I’ve blogged about Azure Functions before; the main reason for picking one here is that running this function in the consumption model will cost us nothing (or next to nothing if you are an extremely heavy user).

Setup

This time the Azure Function will not just run a script; it will act as a “middleware” for the IT-Glue API. Follow this guide to set up your Azure Function App. The only difference is that we select “PowerShell” as our runtime language. Do not continue at “Create an HTTP triggered function”, as we’re going to insert our own function.

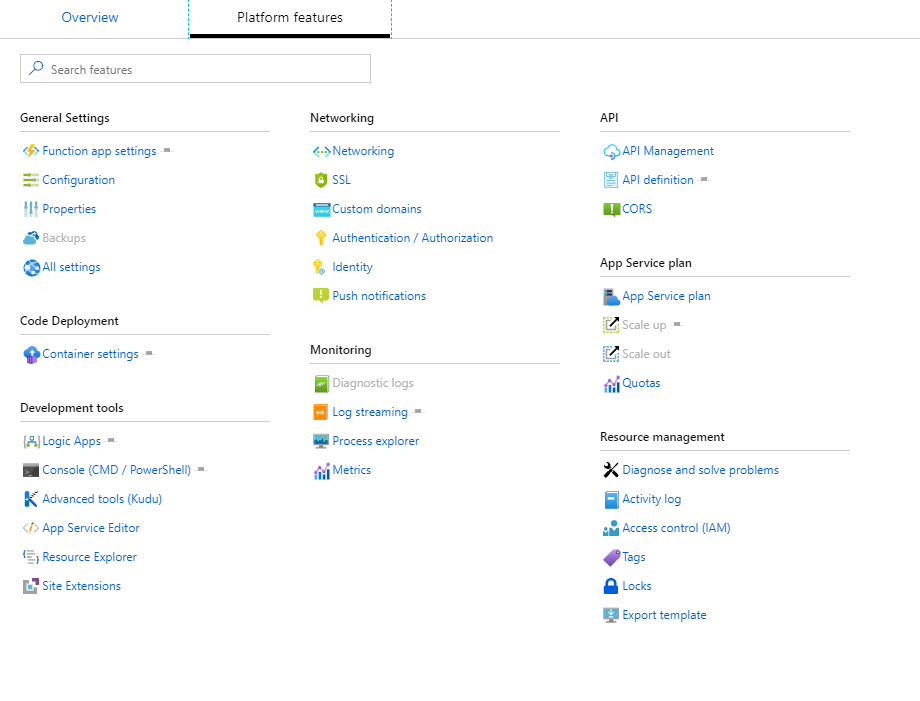

When the Function App has been deployed click on your Function’s name and then on “platform features”. You should be presented with the following screen

In this screen, click on “Configuration”. We’re going to add some configuration options here that are used in our scripts. Add the following three items:

- AzAPIKey: This will be the new API key you enter in all your scripts that upload data to IT-Glue. Generate a password for this or enter one of your choice.

- ITGlueURI: This is the current IT-Glue API URL you use, most likely https://api.itglue.com or https://api.eu.itglue.com

- ITGlueAPIKey: Your current API key. This is the only location that this API key will be used from now on.
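
Function App settings are exposed to the script as environment variables, which is why the forwarder script reads them through $ENV:. As a minimal sketch, a check like the one below could confirm that all three are present before relying on them. Get-MissingSetting is a hypothetical helper name and is not part of the original script.

```powershell
# Hypothetical helper (not part of the original script): returns the names
# of the required settings that are missing or empty in the environment.
function Get-MissingSetting {
    param(
        [string[]]$Names = @('AzAPIKey', 'ITGlueURI', 'ITGlueAPIKey')
    )
    foreach ($name in $Names) {
        $value = [Environment]::GetEnvironmentVariable($name)
        if ([string]::IsNullOrWhiteSpace($value)) {
            $name   # emit the name of each missing setting
        }
    }
}

# Warn when one or more settings have not been configured.
$missing = @(Get-MissingSetting)
if ($missing.Count -gt 0) {
    Write-Warning "Missing settings: $($missing -join ', ')"
}
```

Running this once at the top of a function makes a typo in a setting name show up as a clear warning instead of a confusing authentication failure.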

After this you can return to the overview page and click the + symbol next to “Functions”, then choose the “HTTP trigger” option. Name the HTTP trigger “AzGlueForwarder” and choose the Anonymous authorisation level. We do this because authentication is handled at the script level and not at the Azure Function level. After creating the function you’ll be presented with a script page. Paste the following script:

AZGlueForwarder

    using namespace System.Net

    # Input bindings are passed in via the param block.
    param($Request, $TriggerMetadata)

    # Check that the caller supplied the correct AzAPIKey.
    if ($Request.Headers.'x-api-key' -eq $ENV:AzAPIKey) {
        # Compare the client IP to the organisation list and check that it exists.
        # Only the part of X-Forwarded-For before the first colon is used as the IP.
        $ClientIP = ($Request.Headers.'X-Forwarded-For' -split ':')[0]
        $CompareList = Import-Csv "AzGlueForwarder\OrgList.csv" -Delimiter ","
        $AllowedOrgs = $CompareList | Where-Object { $_.IP -eq $ClientIP }
        if (!$AllowedOrgs) {
            # The HTTP status stays 200; the error is reported in the body.
            Push-OutputBinding -Name Response -Value ([HttpResponseContext]@{
                    Headers    = @{'content-type' = 'application/json' }
                    StatusCode = [HttpStatusCode]::OK
                    Body       = @{"Error" = "401 - No match found in allowed list" } | ConvertTo-Json
                })
            # Stop here so the request is never forwarded to IT-Glue.
            return
        }

        # Build the IT-Glue resource path by stripping the function's own base URL.
        $resource = $Request.Url -replace "https://$($ENV:WEBSITE_HOSTNAME)/API/", ""
        # Replace x-api-key with the actual IT-Glue key.
        $ITGHeaders = @{
            "x-api-key" = $ENV:ITGlueAPIKey
        }
        $Method = $Request.Method
        $ITGBody = $Request.Body
        #Write-Host ($AllowedOrgs | Out-String)

        # Make up to 3 attempts, with a random pause after each failure.
        $SuccessfullQuery = $false
        $attempt = 3
        while ($attempt -gt 0 -and -not $SuccessfullQuery) {
            try {
                $ITGlueRequest = Invoke-RestMethod -Method $Method -ContentType "application/vnd.api+json" -Uri "$($ENV:ITGlueURI)/$resource" -Body $ITGBody -Headers $ITGHeaders
                $SuccessfullQuery = $true
            }
            catch {
                $ITGlueRequest = @{'Errorcode' = $_.Exception.Response.StatusCode.value__ }
                # Get-Random excludes the maximum, so this waits 0 to 9 seconds.
                $rand = Get-Random -Minimum 0 -Maximum 10
                Start-Sleep $rand
                $attempt--
                if ($attempt -eq 0) {
                    $ITGlueRequest = @{'Errorcode' = "Error code $($_.Exception.Response.StatusCode.value__) - Made 3 attempts and upload failed. $($_.Exception.Message) / Resource was $($ENV:ITGlueURI)/$resource" }
                }
            }
        }

        # Check if we can strip the data that does not belong to this client.
        # Important so passwords/items can only be retrieved for this organisation.
        # Can't do it for all requests, such as get-organisation, but for sensitive data it works perfectly. :)
        if ($ITGlueRequest.data.attributes.'organization-id') {
            Write-Host ($AllowedOrgs.ITGlueOrgID)
            $ITGlueRequest.data = $ITGlueRequest.data | Where-Object { $_.attributes.'organization-id' -in $AllowedOrgs.ITGlueOrgID }
        }

        # Send the final object back to the client.
        Push-OutputBinding -Name Response -Value ([HttpResponseContext]@{
                Headers    = @{'content-type' = 'application/json' }
                StatusCode = [HttpStatusCode]::OK
                Body       = $ITGlueRequest
            })
    }
    else {
        # Wrong or missing AzAPIKey: report the error in the body.
        Push-OutputBinding -Name Response -Value ([HttpResponseContext]@{
                Headers    = @{'content-type' = 'application/json' }
                StatusCode = [HttpStatusCode]::OK
                Body       = @{"Error" = "401 - No API Key entered or API key incorrect." } | ConvertTo-Json
            })
    }

Save the script and use the right-hand menu to add a file to the function. Call this file “OrgList.csv”. This is the database that will be used to check which IPs are allowed to upload data, and for which organisations they can retrieve data.

    IP,ITGlueOrgID
    1.1.1.1,123456
    2.2.2.2,123457
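
To make the role of this file concrete, here is a minimal sketch of how the lookup and the organisation filter behave, using the sample rows above. The function name Get-AllowedOrg, the sample X-Forwarded-For value and the two response records are made up for illustration; only the column names and the comparison logic come from the forwarder script.

```powershell
# The sample OrgList.csv content from above, parsed in memory.
$orgList = @'
IP,ITGlueOrgID
1.1.1.1,123456
2.2.2.2,123457
'@ | ConvertFrom-Csv

# Hypothetical helper: returns the rows whose IP matches the caller.
# Like the forwarder, it keeps only the part before the first colon.
function Get-AllowedOrg {
    param($OrgList, [string]$ForwardedFor)
    $clientIP = ($ForwardedFor -split ':')[0]
    $OrgList | Where-Object { $_.IP -eq $clientIP }
}

$allowed = Get-AllowedOrg -OrgList $orgList -ForwardedFor '1.1.1.1:50123'

# Made-up response with records from two organisations.
$response = [pscustomobject]@{
    data = @(
        [pscustomobject]@{ id = '1'; attributes = [pscustomobject]@{ 'organization-id' = 123456 } }
        [pscustomobject]@{ id = '2'; attributes = [pscustomobject]@{ 'organization-id' = 999999 } }
    )
}

# Keep only the records that belong to the caller's allowed organisation(s).
$response.data = @($response.data |
        Where-Object { $_.attributes.'organization-id' -in $allowed.ITGlueOrgID })
```

In this sketch a caller from 1.1.1.1 only gets the record for organisation 123456 back, and a caller from an IP that is not in the list gets no match at all.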

Next, click on “Integrate” and select the allowed methods. In our case we want all methods selected for the IT-Glue API. Replace the “Route template” with “{*path}”.

Click on AzGlueForwarder once more, press “Get Function URL” and copy this URL up to the {PATH} part. This is the URL you put in place of the API endpoint variable in your scripts, e.g. “https://AzureFunctionITGlue.azurewebsites.net/api/”.
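
As a rough sketch of what changes on the client side, the snippet below builds the parameters for a request that goes through the forwarder instead of straight to IT-Glue. New-ForwarderRequest is a hypothetical helper, the key value is a placeholder, and the organizations resource is only an example path; the x-api-key header and the content type match what the forwarder script uses.

```powershell
# Hypothetical helper: builds a parameter set for Invoke-RestMethod that
# targets the forwarder URL and authenticates with the AzAPIKey.
function New-ForwarderRequest {
    param(
        [string]$BaseUri,
        [string]$ApiKey,
        [string]$Resource,
        [string]$Method = 'Get'
    )
    @{
        Uri         = '{0}/{1}' -f $BaseUri.TrimEnd('/'), $Resource.TrimStart('/')
        Method      = $Method
        Headers     = @{ 'x-api-key' = $ApiKey }
        ContentType = 'application/vnd.api+json'
    }
}

$params = New-ForwarderRequest `
    -BaseUri 'https://AzureFunctionITGlue.azurewebsites.net/api/' `
    -ApiKey 'your-AzAPIKey-value' `
    -Resource 'organizations'

# Uncomment to actually send the request through the forwarder:
# $result = Invoke-RestMethod @params
```

The real IT-Glue key never appears in the client script; only the forwarder URL and the AzAPIKey do.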

And that’s it! A small recap:

- Create the Azure Function

- Add the environment variables AzAPIKey, ITGlueURI, and ITGlueAPIKey.

- The function URL will be your new IT-Glue API URL to put in your scripts.

- The AzAPIKey is the key to put in your script.

- The IT-Glue API key will only remain at the Azure Function side.

- The OrgList.csv file should contain your clients’ IPs and their allowed organisations.

- Your API requests can only be used for the organisations defined in OrgList.csv.

- When an API call fails, the script makes up to 3 attempts in total, with a random wait of 0 to 9 seconds after each failure to prevent rate limiting from getting in the way.
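
The retry behaviour from the last point can also be sketched as a small reusable function. This is a simplified variant rather than the exact loop in the forwarder: Invoke-WithRetry and its parameters are hypothetical names, and it rethrows the last error instead of returning an error object.

```powershell
# Hypothetical helper: runs a script block, retrying on any terminating
# error with a random pause between attempts.
function Invoke-WithRetry {
    param(
        [scriptblock]$Action,
        [int]$MaxAttempts = 3,
        [int]$MaxDelaySeconds = 10   # exclusive upper bound for the pause
    )
    for ($attempt = 1; $attempt -le $MaxAttempts; $attempt++) {
        try {
            return & $Action
        }
        catch {
            # Out of attempts: surface the last error to the caller.
            if ($attempt -eq $MaxAttempts) { throw }
            # A random pause spreads out clients that were rate limited together.
            Start-Sleep -Seconds (Get-Random -Minimum 0 -Maximum $MaxDelaySeconds)
        }
    }
}

# Example: an action that fails twice before succeeding.
$script:calls = 0
$result = Invoke-WithRetry -MaxDelaySeconds 1 -Action {
    $script:calls++
    if ($script:calls -lt 3) { throw 'rate limited' }
    'ok'
}
```

The random pause matters because many workstations tend to upload at the same moment; if they all retried after a fixed delay they would hit the rate limit together again.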

It’s a fairly simple but clean solution to use while I work with our friends at IT-Glue on raising the API limits. It also helps on the security side, as no one will be able to just download your entire database.

That’s it for today. As always, Happy PowerShelling.